The Ministry of Economy, Trade and Industry (METI) is a government agency responsible for the development of Japan’s economy and industry, as well as for ensuring the stable supply of mineral resources and energy. To advance efficiency and enhance its administrative operations, METI planned to introduce generative AI and selected ABeam Consulting as its partner. Following a verification study, development of the generative AI environment METI-LLM commenced in April 2024, and the service was launched within just three months. As of October 2025, METI-LLM has been used more than 1.5 million times by a cumulative total of approximately 7,000 users, representing nearly 60% of all METI staff.

With extremely high utilization and frequency of use, METI-LLM is becoming firmly established across the ministry. METI intends to pursue further improvements to METI-LLM in order to achieve greater efficiency and enhance its administrative operations.

Advancing efficiency and enhancing administrative operations through generative AI, building an AI environment with high security and usability informed by frontline feedback

- Government/Public Corporation

- AI

Challenge

- Introducing generative AI for greater efficiency and enhancement of administrative operations

ABeam Solution

- Supporting two projects for the introduction of generative AI: a verification study project and an implementation project

- Launching METI-LLM, a generative AI environment achieving both security and usability, within three months of the start of its construction

Success Factors

- As of October 2025, METI-LLM has been used more than 1.5 million times by a cumulative total of approximately 7,000 people, with a daily average of about 6,600 uses by approximately 900 users

- With extremely high utilization and frequency of use, METI-LLM is contributing to greater efficiency and enhancement of administrative operations

Project Background

Planning to use generative AI to improve operational efficiency and policymaking

Since fiscal 2023, the Ministry of Economy, Trade and Industry (METI) has been promoting organization-wide operational reform under its “Organizational Management Reform” initiative, in which the pursuit of operational efficiency is positioned as a key pillar. With numerous policy issues continually requiring attention, the collection and analysis of knowledge, idea generation, and the gathering of other information necessary for policymaking have had to be carried out manually, posing a significant challenge.

As generative AI began attracting attention in the latter half of 2022 and saw initial real-world deployment in the spring of 2023, METI began preparing for the introduction of generative AI. Using AI to handle certain tasks would enable staff expertise to be redirected toward addressing the more fundamental challenges facing the organization while also improving operational efficiency. “In government administration, errors are simply not permissible. It is essential to provide an environment in which staff can use generative AI with confidence, ensuring that neither security incidents nor misunderstandings or incorrect dissemination of information occur. We therefore began by examining how to establish a framework capable of delivering stable and reliable value,” says Mr. Masashi Osada, DX Manager, Digital Transformation Office, Minister’s Secretariat, METI.

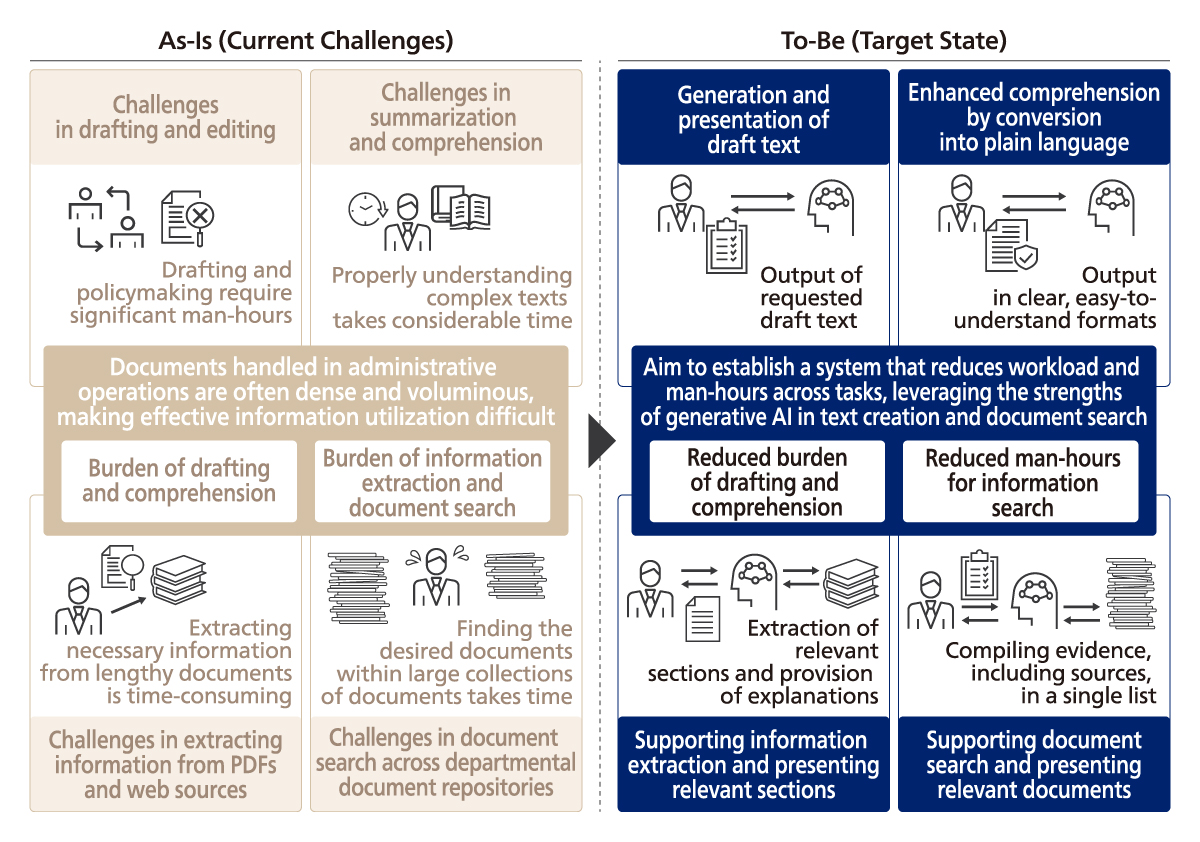

To effectively utilize generative AI, it is necessary to identify the tasks for which it is best suited. METI therefore conducted a thorough review of staff operations, identified tasks with high potential for substitution by generative AI, and proceeded to verify the effectiveness of its application. “First, through interviews, we clarified which tasks staff were spending the most time on and where the workload was greatest, and we categorized those tasks accordingly. Based on the findings of the survey, we then developed a verification initiative for the use of generative AI,” says Mr. Yuto Ishii, Assistant Director, Digital Transformation Office, Minister’s Secretariat, METI.

ABeam worked closely with us from verification of generative AI through to its implementation and use within METI.

Mr. Masashi Osada, DX Manager, Digital Transformation Office, Minister’s Secretariat, METI

Project’s goals, challenges, and solutions

Clarifying the decision-making requirements for implementing generative AI, step by step

In the verification study, interviews were conducted with staff in each section to gather insights into their expectations for generative AI and the work-related challenges they face. By carefully listening to staff, the team analyzed and identified use cases grounded in frontline operations. Based on these findings, tasks were classified into nine categories—inquiry handling, drafting, summarization, translation, issue identification, sounding board discussions, code generation, case collection, and data analysis—and it was hypothesized that these tasks are particularly well suited to the use of generative AI.

“In the course of verifying the introduction of generative AI, one of our major roles was to accurately organize the feedback received from METI staff so that Mr. Osada and Mr. Ishii could make well-informed decisions. In classifying the work, we conducted detailed interviews on the tasks actually being performed and then gradually abstracted them into broader categories. It was a painstaking process, but we worked through the prerequisites for the necessary decision-making toward the implementation of generative AI, one by one,” recalls Takashi Kuwabara, Manager, Artificial Intelligence Leap Sector, Digital Technology Business Unit, ABeam Consulting.

Following these efforts, METI began developing a security-focused system to ensure that staff could use generative AI with confidence. At that time, in 2023, the broader adoption of generative AI had only just begun, and there were no clear de facto standards or established reference cases. Accordingly, 150 participating staff members were asked to use generative AI in their work; their usage was logged, and their evaluations were collected through questionnaires. Responding directly to staff feedback, METI then moved forward with building a generative AI testing environment optimized for operational needs and continued validating relevant use cases.

“As generative AI became a major focus of attention, many staff members had high expectations that it would enable them to perform a wide range of tasks with considerable flexibility. While carefully balancing expectations with practical realities, we assessed which tasks were suitable for the introduction of generative AI and which were not, and moved forward with preparations for implementation while ensuring staff understanding,” recalls Mr. Ishii.

As METI staff are caught up in their day-to-day responsibilities, it is not easy for them to step back and objectively review workflows. In this context, ABeam, acting as a consultant, supported the structuring of these workflows and, based on the findings, examined and proposed approaches to improving operational efficiency through the use of generative AI.

“Rather than approaching the challenges faced by METI simply as a contractor, ABeam engaged with the ministry from the same perspective as METI’s staff, which allowed the verification process to proceed smoothly,” says Mr. Osada. At the same time, during system implementation, ABeam rapidly developed and delivered prototypes, incorporated feedback from users participating in the verification process, and repeatedly refined the system by introducing new ideas. As a result, the system was implemented to a very high standard.

We will continue to examine and promote measures for greater operational efficiency and enhancement of policymaking through the use of generative AI.

Mr. Yuto Ishii, Assistant Director, Digital Transformation Office, Minister’s Secretariat, METI

Results and future prospects

Promoting continuous improvement for greater efficiency and enhancement of administrative operations

METI decided to introduce generative AI at the ministry-wide level in fiscal 2024, as the verification study concluded that generative AI could be effectively utilized across the ministry’s operations. Development of the generative AI environment, METI-LLM, began in April 2024, and it went live at the end of June—just three months later.

In establishing, within a short timeframe, an environment that would allow all staff to use generative AI, the key challenge was to achieve both security and usability. Accordingly, security was given the highest priority in developing METI-LLM, and a user-friendly interface was designed on top of that secure foundation.

“We believed that, in order to encourage widespread use among staff, visual appeal was also important, and we refined the design to achieve this. At the same time, METI’s information systems had numerous security requirements specific to the ministry. From the shared perspectives of both METI and ABeam, we carefully worked through each of these requirements,” says Kuwabara.

At METI, multiple briefing sessions were held for staff to introduce METI-LLM and provide guidance on its use. After the system went live, usage was closely monitored. As it became clear that staff were actively adopting the tool, the team explored areas for improvement, introducing new functions and enhancing usability. As a result, four major version upgrades have been implemented. By October 2025, nearly 60% of all METI staff—approximately 7,000 people—had used METI-LLM a cumulative total of more than 1.5 million times, with around 900 users per day generating over 6,600 uses on average.

“The use of generative AI within the ministry has clearly taken root. In our survey, we received comments such as, ‘It helped me identify leads for policy development,’ and ‘It has become indispensable in my work.’ METI-LLM is now an extremely important tool for our staff,” says Mr. Ishii.

METI plans to organize and structure its internal data so that documents are stored in formats that generative AI can readily process, enhance staff literacy to ensure the proper use of generative AI, and continue improving the METI-LLM system currently in operation. Through these initiatives, METI aims to further expand the use of generative AI for greater efficiency and enhancement of its administrative operations.

We supported METI in linking its organizational data strategy to the product and delivered a platform that contributes to operational efficiency. We will continue to make proposals to further enhance the value of METI-LLM within METI.

Takashi Kuwabara, Manager, Artificial Intelligence Leap Sector, Digital Technology Business Unit, ABeam Consulting

As-Is / To-Be Use Cases Where Generative AI Is Expected to Deliver Value

Screenshot of METI-LLM

Customer Profile

- Company name: Ministry of Economy, Trade and Industry

- HQ Location: 1-3-1 Kasumigaseki, Chiyoda-ku, Tokyo

- Established: 1949

- Business: Government agency responsible for development of Japan’s economy and industry and for securing the supply of energy and mineral resources
